Databricks Certified Data Engineer Professional: DATABRICKS-CERTIFIED-PROFESSIONAL-DATA-ENGINEER

Want to pass your Databricks Certified Data Engineer Professional DATABRICKS-CERTIFIED-PROFESSIONAL-DATA-ENGINEER exam in the very first attempt? Try Pass2lead! It is equally effective for both starters and IT professionals.

- Vendor: Databricks

- Exam Code: DATABRICKS-CERTIFIED-PROFESSIONAL-DATA-ENGINEER

- Exam Name: Databricks Certified Data Engineer Professional

- Certifications: Databricks Certifications

- Total Questions: 127 Q&As

- Updated on: May 27, 2026

- Note: Product instant download. Please sign in and click My account to download your product.

- Q&As Identical to the VCE Product

- Windows, Mac, Linux, Mobile Phone

- Printable PDF without Watermark

- Instant Download Access

- Download Free PDF Demo

- Includes 365 Days of Free Updates

VCE

- Q&As Identical to the PDF Product

- Windows Only

- Simulates a Real Exam Environment

- Review Test History and Performance

- Instant Download Access

- Includes 365 Days of Free Updates

Passing Certification Exams Made Easy

Everything you need to prepare for and quickly pass the tough certification exams the first time

- 99.5% pass rate

- 7 years of experience

- 7000+ IT Exam Q&As

- 70000+ satisfied customers

- 365 days of free updates

- 3 days of preparation before your test

- 100% Safe shopping experience

- 24/7 Support

DATABRICKS-CERTIFIED-PROFESSIONAL-DATA-ENGINEER Online Practice Questions and Answers

Question 1

A Databricks job has been configured with 3 tasks, each of which is a Databricks notebook. Task A does not depend on other tasks. Tasks B and C run in parallel, with each having a serial dependency on Task A.

If task A fails during a scheduled run, which statement describes the results of this run?

A. Because all tasks are managed as a dependency graph, no changes will be committed to the Lakehouse until all tasks have successfully been completed.

B. Tasks B and C will attempt to run as configured; any changes made in task A will be rolled back due to task failure.

C. Unless all tasks complete successfully, no changes will be committed to the Lakehouse; because task A failed, all commits will be rolled back automatically.

D. Tasks B and C will be skipped; some logic expressed in task A may have been committed before task failure.

E. Tasks B and C will be skipped; task A will not commit any changes because of stage failure.
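
The dependency behavior this question probes can be explored with a small simulation. The sketch below is plain Python, not the Databricks Jobs API: `Task`, `run_job`, and the `committed` list are hypothetical names invented for illustration. It assumes the default rule that a task runs only when all of its upstream tasks succeeded, and it models each write as an independent commit that is not undone when a later step in the same task raises.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    """Hypothetical stand-in for a job task; not a Databricks class."""
    name: str
    action: Callable[[List[str]], None]
    depends_on: List[str] = field(default_factory=list)


def run_job(tasks: List[Task], committed: List[str]) -> Dict[str, str]:
    """Run tasks in the given order (assumed to be topologically sorted).

    A task is skipped unless every upstream task finished with SUCCESS.
    Writes already appended to `committed` are never rolled back.
    """
    status: Dict[str, str] = {}
    for task in tasks:
        if any(status.get(dep) != "SUCCESS" for dep in task.depends_on):
            status[task.name] = "SKIPPED"
            continue
        try:
            task.action(committed)
            status[task.name] = "SUCCESS"
        except Exception:
            status[task.name] = "FAILED"
    return status


def task_a(committed: List[str]) -> None:
    committed.append("A: first write")  # this write lands before the failure
    raise RuntimeError("task A failed after its first write")


def task_b(committed: List[str]) -> None:
    committed.append("B: write")


def task_c(committed: List[str]) -> None:
    committed.append("C: write")


committed: List[str] = []
result = run_job(
    [Task("A", task_a), Task("B", task_b, ["A"]), Task("C", task_c, ["A"])],
    committed,
)
print(result)
print(committed)
```

In this toy model, the downstream tasks never start once Task A fails, while the write Task A made before failing stays in place. Whether that mirrors a given production job depends on how its tasks and run conditions are configured.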

Question 2

Which statement describes the default execution mode for Databricks Auto Loader?

A. New files are identified by listing the input directory; new files are incrementally and idempotently loaded into the target Delta Lake table.

B. Cloud vendor-specific queue storage and notification services are configured to track newly arriving files; new files are incrementally and idempotently loaded into the target Delta Lake table.

C. A webhook triggers a Databricks job to run any time new data arrives in a source directory; new data is automatically merged into target tables using rules inferred from the data.

D. New files are identified by listing the input directory; the target table is materialized by directly querying all valid files in the source directory.
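
To make the phrase "incrementally and idempotently loaded" concrete, here is a toy sketch of directory-listing ingestion in plain Python. It is not Auto Loader's implementation and uses no Spark APIs: `load_new_files`, the in-memory `processed` set (standing in for a checkpoint), and the `target` list (standing in for a table) are all hypothetical names chosen for illustration.

```python
import os
import tempfile
from typing import List, Set


def load_new_files(source_dir: str, processed: Set[str], target: List[str]) -> List[str]:
    """List the directory, load only files not seen before, and record them.

    `processed` plays the role of a checkpoint, so repeated runs over the
    same directory never load a file twice (idempotent, incremental).
    """
    new_files = sorted(f for f in os.listdir(source_dir) if f not in processed)
    for name in new_files:
        with open(os.path.join(source_dir, name)) as fh:
            target.extend(line.rstrip("\n") for line in fh)
        processed.add(name)
    return new_files


with tempfile.TemporaryDirectory() as src:
    processed: Set[str] = set()
    target: List[str] = []

    with open(os.path.join(src, "a.csv"), "w") as fh:
        fh.write("row1\n")

    first = load_new_files(src, processed, target)   # picks up a.csv
    second = load_new_files(src, processed, target)  # nothing new to load

    with open(os.path.join(src, "b.csv"), "w") as fh:
        fh.write("row2\n")

    third = load_new_files(src, processed, target)   # picks up only b.csv

print(first, second, third, target)
```

The second call loads nothing because every listed file is already recorded, which is the property that distinguishes incremental loading from re-reading the whole source directory on each run.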

Question 3

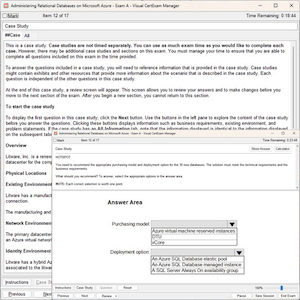

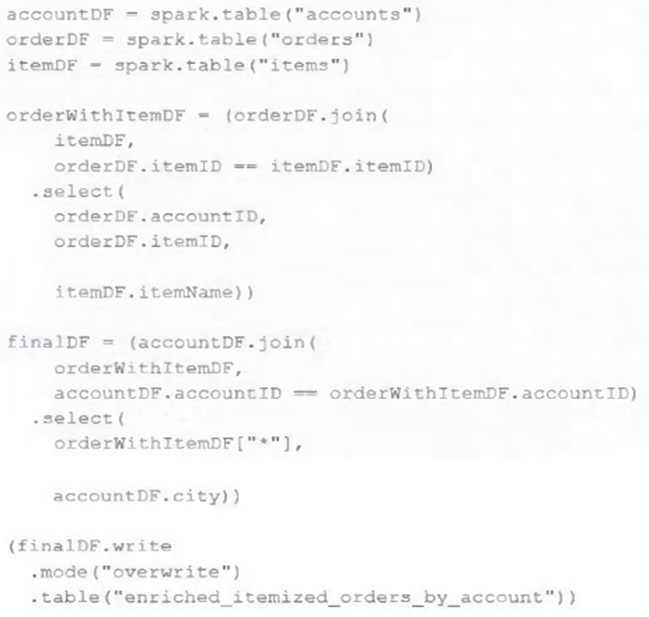

The data engineering team maintains the following code: Assuming that this code produces logically correct results and the data in the source tables has been de-duplicated and validated, which statement describes what will occur when this code is executed?

A. A batch job will update the enriched_itemized_orders_by_account table, replacing only those rows that have different values than the current version of the table, using accountID as the primary key.

B. The enriched_itemized_orders_by_account table will be overwritten using the current valid version of data in each of the three tables referenced in the join logic.

C. An incremental job will leverage information in the state store to identify unjoined rows in the source tables and write these rows to the enriched_itemized_orders_by_account table.

D. An incremental job will detect if new rows have been written to any of the source tables; if new rows are detected, all results will be recalculated and used to overwrite the enriched_itemized_orders_by_account table.

E. No computation will occur until enriched_itemized_orders_by_account is queried; upon query materialization, results will be calculated using the current valid version of data in each of the three tables referenced in the join logic.

Reviews

- This file is very much valid; I passed the exam successfully. Thanks to my friend for introducing these dumps to me.

- Hi all, I took the exam this week. Many of the questions were from these dumps, and I swear I'm not lying. Recommended to all.

- I have not taken the exam yet, but I feel more and more confident about it after using these dumps. I am sitting my exam this coming Saturday. I believe I will pass.

- Dump still valid, I got 979/1000 today. Thanks to you all.

- I love these dumps. They are really helpful and convenient. Strongly recommended.

- Yes, I have passed the exam by using these dumps, so you can also try them and you will have unexpected achievements. Recommended to all.

- Yes, I passed the exam in the morning. Thanks for this study material. Recommended.

- Wonderful. I just passed. Good luck to you.

- Hi guys, thanks for your help. I passed the exam with a good score yesterday. Thanks a million.

- These are valid dumps. I passed mine yesterday. All the questions are from these dumps. Thanks.

Printable PDF
